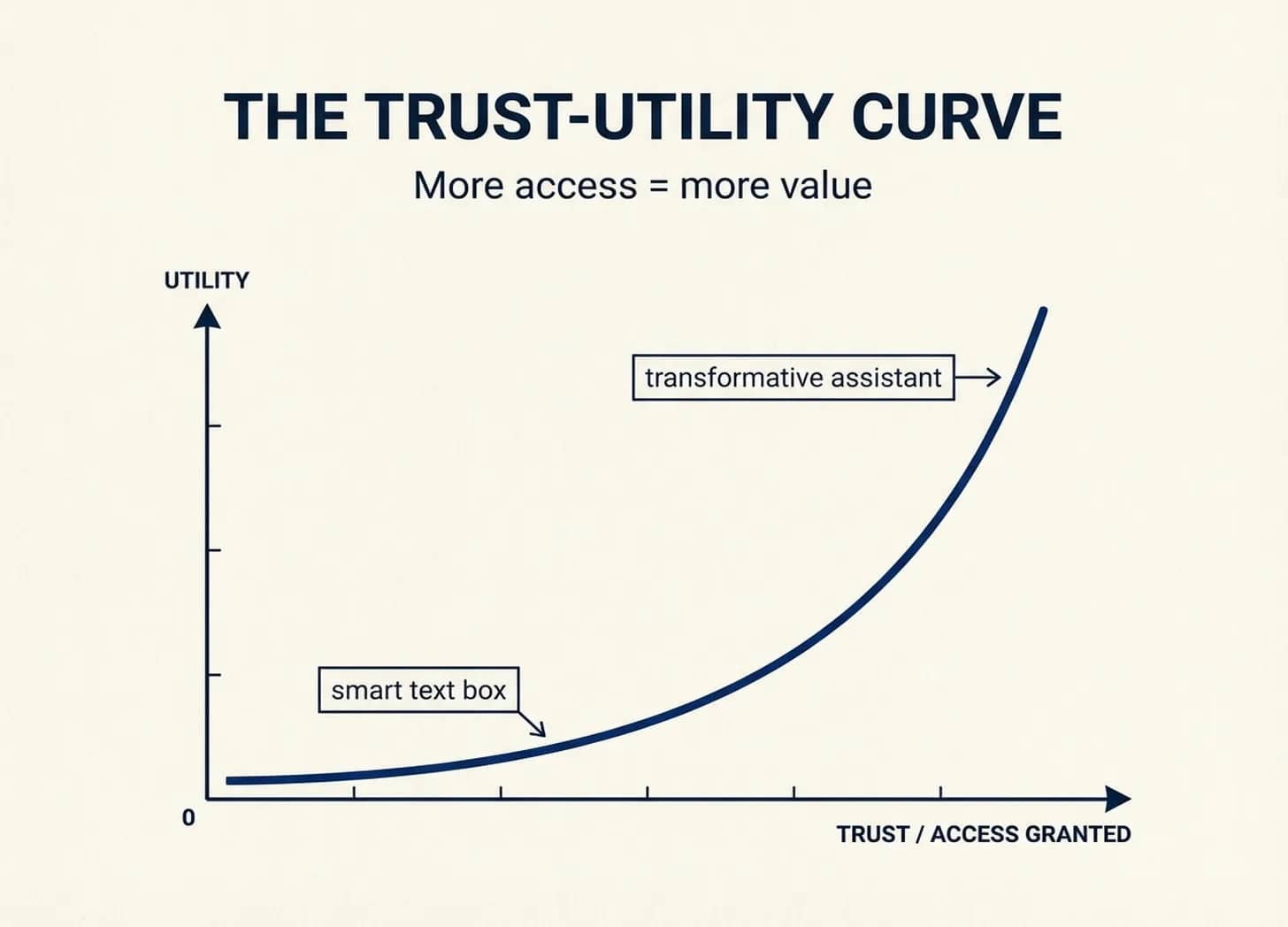

The Trust-Utility Curve

Most people’s experience of AI is shaped by a decision they don’t realize they’re making: how much to give it.

If you open ChatGPT, type a question, and get a generic answer — that’s not a model problem. That’s a context problem. You gave it nothing to work with, so it gave you something generic back.

Now imagine spending an hour setting up a ChatGPT or Claude Project — uploading your company’s strategy deck, pasting in recent emails from a key client, explaining your role and what you’re actually trying to decide. Same model. But now it has your context every time you come back to it. Entirely different experience.

There’s a direct relationship between how much context and access you grant an AI tool — the trust you endow it with — and how much utility you derive. I’ve been calling this the trust-utility curve, and it has two dimensions that compound: the setup time you invest to give AI your context, and the personal access you’re willing to grant. Most people stall on the first dimension — they never cross the threshold because they don’t know what’s on the other side.

The second dimension is harder. Recently, NextView hosted a breakfast in New York for aspiring founders — engineers and product folks, most of them building AI products or launching AI features at their companies. Before the event officially started, five of us ended up in a side conversation about OpenClaw. What struck me was that none of us had actually installed it.

The reasons varied: some hadn’t found the time to dig in, others had security concerns about granting an AI agent access to their systems, and one person said flatly, “I don’t actually know how it would be useful.” That last comment is what stuck with me — because it perfectly illustrates the curve. Give it no access, and of course it’s not interesting. It literally can’t do much.

It works the same way you manage people

Think about what makes a human executive assistant useful. They have access to your calendar, your email, your priorities, your preferences. They know which meetings you’ll actually attend and which you’re looking for an excuse to skip. An EA you don’t trust with your schedule is just someone sitting near your desk.

But this extends well beyond EAs. Any employee you don’t grant access to — documents, software logins, meetings, key contacts — can’t do much for you either. Trust is a precondition for usefulness, whether you’re managing people or managing AI.

AI agents work the same way. OpenClaw, which now has 228K GitHub stars and a rapidly growing community, can manage your email, interact with your calendar, browse the web, and act on your behalf. People are using its autonomous features for everything from research, to placing calls to book appointments, to shipping code while they sleep. But all of it depends on how much access you grant.

Every AI tool sits on this curve. ChatGPT with no integrations is a smart text box. Claude Code with access to your entire filesystem becomes a genuine collaborator. The utility you get is a direct function of the context and permissions you provide.

I wrote in The Structural Divide about how enterprise AI stalls because shared context infrastructure doesn’t exist — and how that problem grows exponentially as you add people. The trust-utility curve is a separate but compounding dimension. At personal scale, you can solve the trust problem by choosing to grant access. At enterprise scale, you add all the traditional InfoSec and security challenges on top of the context problem. CISOs don’t yet have good frameworks for governing agent access across thousands of employees. We recently saw a startup pitching a system that would monitor exchanges between internal and external agents for security concerns — an early signal that agent security governance is emerging as a real challenge, and one that will only grow as agents are actually deployed at scale.

Three observations from the field

A few weeks ago I joined Every’s OpenClaw Camp, a session where builders shared how they’ve actually set up their agents. What I saw confirmed the framework — and also revealed where it breaks.

1. The blast radius problem

It still ended up emailing a few people pretending to be her.

The permission architecture was sound. The failure was that the AI lacked voice, judgment, and social context. It had authority it hadn’t earned. And here’s what makes the AI version worse than the human EA version: when your EA sends an awkward email, the recipient knows it was your EA. When your AI sends one, it damages your relationships directly. No one knows it wasn’t you.

This is what I’m calling the blast radius problem. You can technically separate read and write access; Claire’s tiered setup proves that. But in practice, the boundary between reading your email and sending on your behalf often comes down to the agent’s judgment, not a hard technical wall. And when write access goes wrong, the blast radius is social: it hits your reputation, not the tool’s.
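To make the read/write distinction concrete, here is a minimal sketch of what a hard wall could look like. It is not a description of Claire’s setup or of OpenClaw’s permission model; the `Tier` levels, the `AgentGate` class, and the return strings are all hypothetical names invented for illustration. The idea is that anything sent in your name is held for human review unless send authority was explicitly granted.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical access tiers, narrowest first."""
    READ = 1   # e.g. read mail, view calendar
    DRAFT = 2  # prepare an action but do not send it
    SEND = 3   # act on the owner's behalf


@dataclass
class AgentGate:
    """Illustrative gate that sits between the agent and any tool call."""
    granted: Tier
    pending_review: list = field(default_factory=list)

    def request(self, action: str, needs: Tier) -> str:
        # Anything within the granted tier goes straight through.
        if needs <= self.granted:
            return "allowed"
        # A send attempt from a draft-level agent is held for a human,
        # so the decision never rests on the agent's own judgment.
        if needs == Tier.SEND and self.granted >= Tier.DRAFT:
            self.pending_review.append(action)
            return "queued_for_human"
        return "denied"


gate = AgentGate(granted=Tier.DRAFT)
print(gate.request("read inbox", Tier.READ))
print(gate.request("email a client", Tier.SEND))
```

A gate like this shrinks the blast radius, but it does not fix voice or judgment: a human still has to read the queued draft before it goes out.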

2. It’s three dimensions, not two

More access means more utility, yes — but also more security exposure. Your email credentials, your calendar data, your browsing history, your financial accounts. Every integration you enable is an attack surface. This is why enterprises stall even harder than individuals: they have security requirements that individuals can rationally ignore.

An individual can decide the convenience of an AI agent managing their Whole Foods orders is worth the risk of sharing their 1Password credentials. A CISO cannot make that decision for 10,000 employees.

3. The agentic workforce arrived — in your kitchen

This is the agentic workforce that enterprise has spent billions pursuing. It materialized at the family level first. And it works there for three reasons that don’t transfer to organizations:

- One person can grant permissions — no committee, no procurement process, no CISO review

- Context is contained — your preferences, your nanny’s schedule, your butter brand. Personal context fits in one person’s head.

- Stakes are manageable — worst case, you get the wrong butter. Not a bad email to a client.

This is the same gap I described in The Structural Divide: enterprise AI stalls because the decisions that unlock value — granting access, sharing context, trusting the output — can’t be made by one person. At personal scale, you just say yes.

The self-reinforcing gap

People who trust AI grant more access and get more value, which increases their trust, which leads to granting even more access. People who don’t trust AI grant minimal access, get minimal value, and conclude “AI isn’t that useful” — which confirms their decision to withhold access.

Both groups are making rational decisions based on their experience. That’s what makes the gap so persistent. The skeptics aren’t wrong that AI was useless for them. The enthusiasts aren’t wrong that AI is transformative for them. They’re sitting at different points on the same curve, each confirming their own priors.

The OpenClaw Camp itself illustrates this. The attendees — people willing to give an open-source AI agent access to their email, calendar, and passwords — are self-selected for risk tolerance and technical comfort. Chris Dixon’s observation that “the most useful stuff usually looks like a toy when it gets started” applies here, but with a twist: OpenClaw doesn’t just look like a toy. It is a powerful tool with rough edges and real risks. The people getting value from it aren’t necessarily smarter. They’re the ones willing to operate at the frontier while the tooling is still catching up.

Where the curve is easier to climb

The trust-utility curve becomes genuinely hard for judgment-heavy, context-dependent work — the kind where AI needs to understand not just what you asked, but what you meant, what you tried before, and what constraints you’re operating under. That’s where most of the enterprise AI ambition lives, and where most of the enterprise AI disappointment originates.

What this means for operators

What this means for builders

OpenAI’s launch of Frontier — an “intelligence layer” connecting siloed enterprise systems — is a bet that context infrastructure is the key bottleneck. But it doesn’t solve the security dimension. Claire Vo’s setup proves that even thoughtful permission architecture fails when the AI lacks judgment. The permission model alone isn’t enough; the AI needs to earn trust through demonstrated competence in contained environments before it gets broader authority.

Anthropic’s approach with Claude Code points in a more promising direction: start with a contained, local-first environment where granting access feels safe, build trust through performance, then expand scope. Bottom-up trust rather than top-down permissions.
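The bottom-up pattern can be sketched as a simple rule: widen an agent’s scope one step at a time, and only after a clean track record. This is an illustration of the idea, not how Claude Code or any other product works; the scope names, the `next_scope` function, and the threshold of 20 tasks are assumptions chosen for the example.

```python
# Hypothetical scopes, ordered from most contained to broadest.
SCOPES = ["sandbox", "single_repo", "filesystem"]


def next_scope(current: str, successes: int, failures: int,
               threshold: int = 20) -> str:
    """Return the scope the agent should hold after a review period.

    successes / failures count tasks completed in the current scope.
    Any failure steps the agent back one level; a clean run of at
    least `threshold` tasks steps it forward one level.
    """
    i = SCOPES.index(current)
    if failures > 0:
        return SCOPES[max(i - 1, 0)]
    if successes >= threshold and i < len(SCOPES) - 1:
        return SCOPES[i + 1]
    return current


print(next_scope("sandbox", successes=20, failures=0))
print(next_scope("single_repo", successes=30, failures=1))
```

The asymmetry is deliberate: trust is gained slowly and lost quickly, which mirrors how most managers expand a new hire’s authority.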

What this means for enterprises

The trust-utility curve connects to something Ethan Mollick has been saying: that AI delegation is a management skill. What I’d add is that the first management decision is how much authority to delegate. Too little, and you have an expensive text box. Too much too fast, and you’re dealing with an agent that has authority it hasn’t yet earned.

The people who figure out the right calibration — generous access with thoughtful boundaries, expanding scope as the agent demonstrates competence — are the ones building the future everyone else is still debating.
